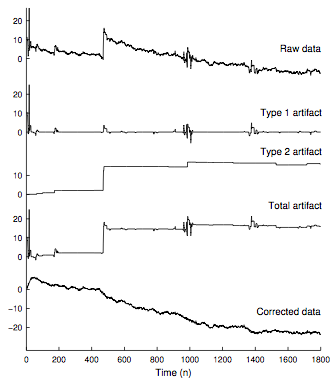

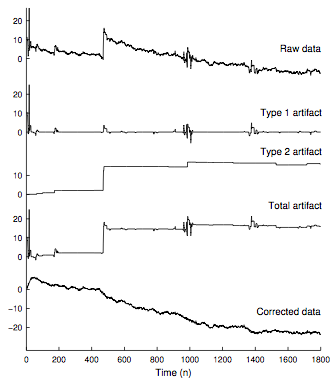

Abstract: This paper addresses the suppression of transient artifacts in signals, e.g., biomedical time series. To that end, we distinguish two types of artifact signals. We define 'Type 1' artifacts as spikes and sharp, brief waves that adhere to a baseline value of zero. We define 'Type 2' artifacts as comprising approximate step discontinuities. We model a Type 1 artifact as being sparse and having a sparse time-derivative, and a Type 2 artifact as having a sparse time-derivative. We model the observed time series as the sum of a low-pass signal (e.g., a background trend), an artifact signal of each type, and a white Gaussian stochastic process. To jointly estimate the components of the signal model, we formulate a sparse optimization problem and develop a rapidly converging, computationally efficient iterative algorithm denoted TARA ('transient artifact reduction algorithm'). The effectiveness of the approach is illustrated using near infrared spectroscopic time-series data.
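The signal model described in the abstract can be illustrated with a small synthetic example. The sketch below is not TARA and is not taken from the authors' Matlab software. It only generates data of the assumed form: a low-pass trend, plus a Type 1 artifact, plus a Type 2 artifact, plus white Gaussian noise. The function names (`synthesize`, `count_nonzero`, `count_nonzero_diff`), the sinusoidal trend, and all parameter values are hypothetical choices made for illustration.

```python
import math
import random


def synthesize(n=300, n_spikes=5, n_steps=3, noise_std=0.1, seed=0):
    """Generate one synthetic series following the four-component model.

    All shapes and amplitudes here are illustrative assumptions,
    not values from the paper.
    """
    rng = random.Random(seed)

    # Low-pass component: one slow sinusoidal cycle (assumed trend shape).
    lowpass = [math.sin(2 * math.pi * k / n) for k in range(n)]

    # Type 1 artifact: isolated spikes on a zero baseline, so both the
    # signal and its first difference are sparse.
    type1 = [0.0] * n
    for k in rng.sample(range(n), n_spikes):
        type1[k] = rng.uniform(-3.0, 3.0)

    # Type 2 artifact: piecewise-constant steps, so only the first
    # difference is sparse (the signal itself need not return to zero).
    type2 = [0.0] * n
    for k in rng.sample(range(1, n), n_steps):
        jump = rng.uniform(-2.0, 2.0)
        for j in range(k, n):
            type2[j] += jump

    # White Gaussian noise.
    noise = [rng.gauss(0.0, noise_std) for _ in range(n)]

    observed = [f + x1 + x2 + w
                for f, x1, x2, w in zip(lowpass, type1, type2, noise)]
    return observed, lowpass, type1, type2


def count_nonzero(x):
    """Number of nonzero samples in x."""
    return sum(1 for v in x if v != 0.0)


def count_nonzero_diff(x):
    """Number of nonzero entries in the first difference of x."""
    return sum(1 for a, b in zip(x, x[1:]) if b != a)


if __name__ == "__main__":
    y, f, x1, x2 = synthesize()
    print("Type 1 nonzeros:", count_nonzero(x1))
    print("Type 1 nonzero differences:", count_nonzero_diff(x1))
    print("Type 2 nonzero differences:", count_nonzero_diff(x2))
```

Counting nonzero samples and nonzero first differences makes the two sparsity assumptions concrete: the Type 1 component has few nonzero values and few nonzero differences, while the Type 2 component has few nonzero differences only.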

Ivan W. Selesnick, Harry L. Graber, Yin Ding, Tong Zhang, Randall L. Barbour

Transient Artifact Reduction Algorithm (TARA) Based on Sparse Optimization

IEEE Trans. on Signal Processing

vol. 62, no. 24, pp. 6596-6611, Dec. 15, 2014

doi: 10.1109/TSP.2014.2366716

IEEE Xplore

Download preprint: tara_2014.pdf

Download Matlab software: TARA_software.zip

This research was supported by the NSF under Grant No. CCF-1018020, the NIH under Grant Nos. R42NS050007, R44NS049734, and R21NS067278, and by DARPA project N66001-10-C-2008.

Any opinions, findings, and conclusions or recommendations expressed in this material are those of the authors and do not necessarily reflect the views of the National Science Foundation or other sponsors.